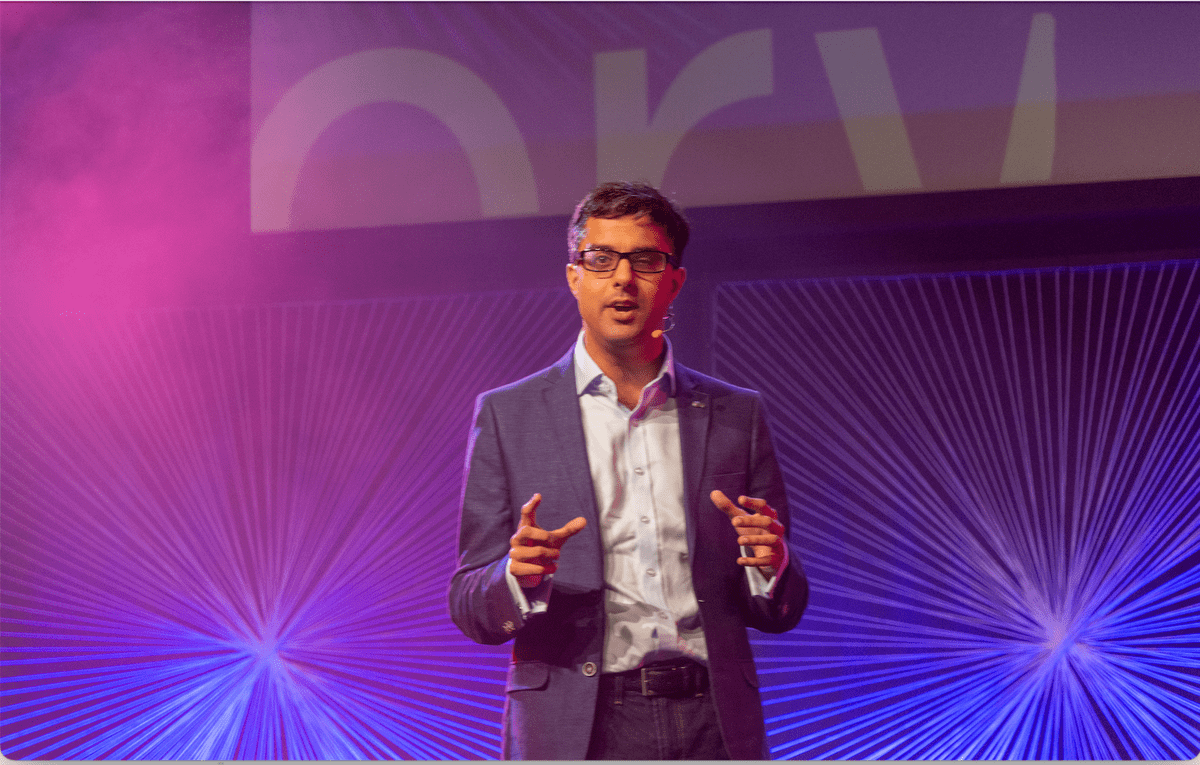

Junaid Mubeen

Mathematician turned educator, writer and speaker.

At the nexus of mathematics, education and AI

Exploring the impact of AI on how we think, work and live.

Over a decade of EdTech leadership and consulting experience.

Graduate of Oxford (MMath, DPhil) and Harvard (Ed.M).

Speaking

A selection of past talks. Could I be a speaker for you? Get in touch if so!

Mathematical Intelligence: What we have that machines don't

There's so much talk about the threat posed by intelligent machines that it sometimes seems as though we should surrender to our robot overlords now. But I'm not ready to throw in the towel just yet. We have the edge over machines because of a remarkable system of thought developed over the millennia. It's familiar to us all, but often badly taught and misrepresented in popular discourse: maths. In this book I identify seven areas of intelligence where humans can retain a crucial edge. This is mathematics as you've never seen it before!

Get in touch

I am open to workshops and speaking engagements for schools and organisations looking to unlock the potential of mathematics and/or artificial intelligence.